- Python Pandas - Basics

- Python Pandas - Introduction to Data Structures

- Python Pandas - Index Objects

- Python Pandas - Panel

- Python Pandas - Basic Functionality

- Python Pandas - Indexing & Selecting Data

- Python Pandas - Series

- Python Pandas - Series

- Python Pandas - Slicing a Series Object

- Python Pandas - Attributes of a Series Object

- Python Pandas - Arithmetic Operations on Series Object

- Python Pandas - Converting Series to Other Objects

- Python Pandas - DataFrame

- Python Pandas - DataFrame

- Python Pandas - Accessing DataFrame

- Python Pandas - Slicing a DataFrame Object

- Python Pandas - Modifying DataFrame

- Python Pandas - Removing Rows from a DataFrame

- Python Pandas - Arithmetic Operations on DataFrame

- Python Pandas - IO Tools

- Python Pandas - IO Tools

- Python Pandas - Working with CSV Format

- Python Pandas - Reading & Writing JSON Files

- Python Pandas - Reading Data from an Excel File

- Python Pandas - Writing Data to Excel Files

- Python Pandas - Working with HTML Data

- Python Pandas - Clipboard

- Python Pandas - Working with HDF5 Format

- Python Pandas - Comparison with SQL

- Python Pandas - Data Handling

- Python Pandas - Sorting

- Python Pandas - Reindexing

- Python Pandas - Iteration

- Python Pandas - Concatenation

- Python Pandas - Statistical Functions

- Python Pandas - Descriptive Statistics

- Python Pandas - Working with Text Data

- Python Pandas - Function Application

- Python Pandas - Options & Customization

- Python Pandas - Window Functions

- Python Pandas - Aggregations

- Python Pandas - Merging/Joining

- Python Pandas - MultiIndex

- Python Pandas - Basics of MultiIndex

- Python Pandas - Indexing with MultiIndex

- Python Pandas - Advanced Reindexing with MultiIndex

- Python Pandas - Renaming MultiIndex Labels

- Python Pandas - Sorting a MultiIndex

- Python Pandas - Binary Operations

- Python Pandas - Binary Comparison Operations

- Python Pandas - Boolean Indexing

- Python Pandas - Boolean Masking

- Python Pandas - Data Reshaping & Pivoting

- Python Pandas - Pivoting

- Python Pandas - Stacking & Unstacking

- Python Pandas - Melting

- Python Pandas - Computing Dummy Variables

- Python Pandas - Categorical Data

- Python Pandas - Categorical Data

- Python Pandas - Ordering & Sorting Categorical Data

- Python Pandas - Comparing Categorical Data

- Python Pandas - Handling Missing Data

- Python Pandas - Missing Data

- Python Pandas - Filling Missing Data

- Python Pandas - Interpolation of Missing Values

- Python Pandas - Dropping Missing Data

- Python Pandas - Calculations with Missing Data

- Python Pandas - Handling Duplicates

- Python Pandas - Duplicated Data

- Python Pandas - Counting & Retrieving Unique Elements

- Python Pandas - Duplicated Labels

- Python Pandas - Grouping & Aggregation

- Python Pandas - GroupBy

- Python Pandas - Time-series Data

- Python Pandas - Date Functionality

- Python Pandas - Timedelta

- Python Pandas - Sparse Data Structures

- Python Pandas - Sparse Data

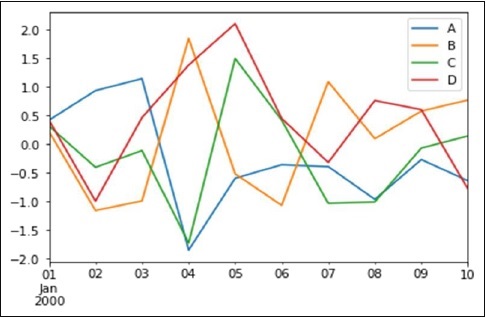

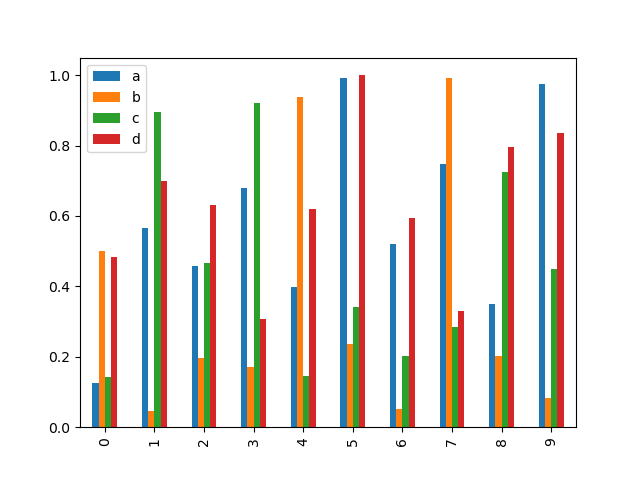

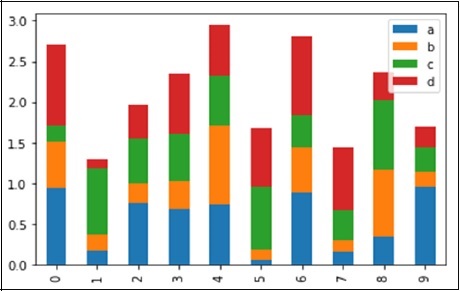

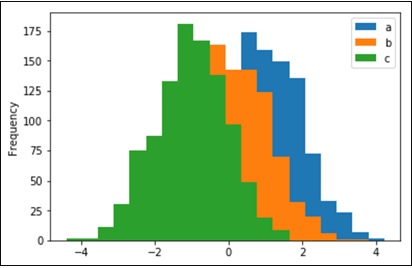

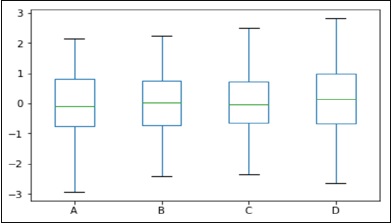

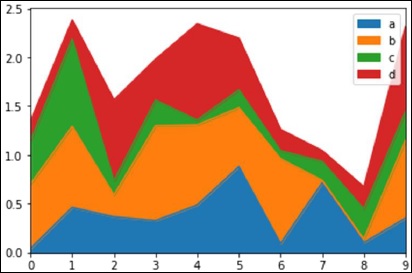

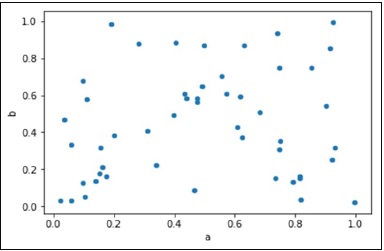

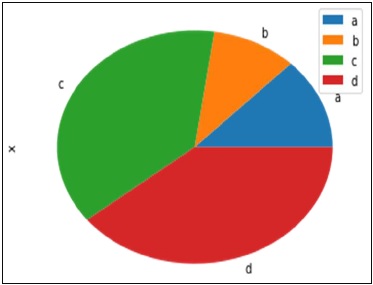

- Python Pandas - Visualization

- Python Pandas - Visualization

- Python Pandas - Additional Concepts

- Python Pandas - Caveats & Gotchas

- Python Pandas Useful Resources

- Python Pandas - Quick Guide

- Python Pandas - Cheatsheet

- Python Pandas - Useful Resources

- Python Pandas - Discussion

Python Pandas - Quick Guide

Python Pandas - Introduction

Pandas is an open-source Python Library providing high-performance data manipulation and analysis tool using its powerful data structures. The name Pandas is derived from the word Panel Data an Econometrics from Multidimensional data.

In 2008, developer Wes McKinney started developing pandas when in need of high performance, flexible tool for analysis of data.

Prior to Pandas, Python was majorly used for data munging and preparation. It had very little contribution towards data analysis. Pandas solved this problem. Using Pandas, we can accomplish five typical steps in the processing and analysis of data, regardless of the origin of data load, prepare, manipulate, model, and analyze.

Python with Pandas is used in a wide range of fields including academic and commercial domains including finance, economics, Statistics, analytics, etc.

Key Features of Pandas

- Fast and efficient DataFrame object with default and customized indexing.

- Tools for loading data into in-memory data objects from different file formats.

- Data alignment and integrated handling of missing data.

- Reshaping and pivoting of date sets.

- Label-based slicing, indexing and subsetting of large data sets.

- Columns from a data structure can be deleted or inserted.

- Group by data for aggregation and transformations.

- High performance merging and joining of data.

- Time Series functionality.

Python Pandas - Environment Setup

Setting up an environment to use the Pandas library is straightforward, and there are multiple ways to achieve this. Whether you prefer using Anaconda, Miniconda, or pip, you can easily get Pandas up and running on your system. This tutorial will guide you through the different methods to install Pandas.

Installing Pandas with pip

The most common way to install Pandas is by using the pip, it is a Python package manager (pip) allows you to install modules and packages. This method is suitable if you already have Python installed on your system. Note that the standard Python distribution does not come bundled with the Pandas module.

To install the pandas package by using pip you need to open the command prompt in our system (assuming, your machine is a windows operating system), and run the following command −

pip3 install pandas

This command will download and install the Pandas package along with its dependencies. If you install Anaconda Python package, Pandas will be installed by default with the following −

Upgrading pip (if necessary)

If you encounter any errors regarding the pip version, you can upgrade pip using the following command −

python -m pip3 install --upgrade pip

Then, rerun the Pandas installation command.

Installing a Specific Version of Pandas

If you need a specific version of Pandas, you can specify it using the following command −

pip3 install pandas==2.3.3

Every time, when you try to install any package, initially pip will check for the package dependencies if they are already installed on the system or not. if not, it will install them. Once all dependencies have been satisfied, it proceeds to install the requested package(s).

Installing Pandas Using Anaconda

Anaconda is a popular distribution for data science that includes Python and many scientific libraries, including Pandas.

Following are the steps to install Anaconda −

- Download Anaconda: Go to Anaconda's official website and download the installer suitable for your operating system.

- Install Anaconda: Follow the installation instructions provided on the Anaconda website.

Pandas comes pre-installed with Anaconda, so you can directly import it in your Python environment.

import pandas as pd

Installing a Specific Version of Pandas with Anaconda

If you need a specific version of Pandas, you can install it using the conda command −

conda install pandas=2.3.3

Anaconda will take up to 300GB of system space for storage and 600GB for air-gapped deployments because it comes with the most common data science packages in Python like Numpy, Pandas, and many more.

Installing Pandas Using Miniconda

Both Anaconda and minconda use the conda package installer, but using anaconda will occupy more system storage. Because anaconda has more than 100 packages, those are automatically installed and the result needs more space.

Miniconda is a minimal installer for conda, which includes only the conda package manager and Python. It is lightweight compared to Anaconda and is suitable if you want more control over the packages you install.

Following are the steps to install Miniconda −

- Download Miniconda: Visit the Miniconda download page and download the installer for your operating system.

- Install Miniconda: Follow the installation instructions provided on the Miniconda website.

Installing Pandas with Miniconda

After successfully installing Miniconda, you can use the conda command to install Pandas −

conda install pandas

Installing Pandas on Linux

On Linux, you can use the package manager of your respective distribution to install Pandas and other scientific libraries.

For Ubuntu Users

sudo apt-get install python-numpy python-scipy python-matplotlibipythonipythonnotebook python-pandas python-sympy python-nose

For Fedora Users

sudo yum install numpyscipy python-matplotlibipython python-pandas sympy python-nose atlas-devel

By following any of these methods, you can set up Pandas on your system and start using it for data analysis and manipulation.

Python Pandas - Introduction to Data Strutures

Python Pandas Data Structures

Data structures in Pandas are designed to handle data efficiently. They allow for the organization, storage, and modification of data in a way that optimizes memory usage and computational performance. Python Pandas library provides two primary data structures for handling and analyzing data −

- Series

- DataFrame

In general programming, the term "data structure" refers to the method of collecting, organizing, and storing data to enable efficient access and modification. Data structures are collections of data types that provide the best way of organizing items (values) in terms of memory usage.

Pandas is built on top of NumPy and integrates well within a scientific computing environment with many other third-party libraries. This tutorial will provide a detailed introduction to these data structures.

Dimension and Description of Pandas Data Structures

| Data Structure | Dimensions | Description |

|---|---|---|

| Series | 1 | A one-dimensional labeled homogeneous array, sizeimmutable. |

| Data Frames | 2 | A two-dimensional labeled, size-mutable tabular structure with potentially heterogeneously typed columns. |

Working with two or more dimensional arrays can be complex and time-consuming, as users need to carefully consider the data's orientation when writing functions. However, Pandas simplifies this process by reducing the mental effort required. For example, when dealing with tabular data (DataFrame), it's more easy to think in terms of rows and columns instead of axis 0 and axis 1.

Mutability of Pandas Data Structures

All Pandas data structures are value mutable, meaning their contents can be changed. However, their size mutability varies −

- Series − Size immutable.

- DataFrame − Size mutable.

Series

A Series is a one-dimensional labeled array that can hold any data type. It can store integers, strings, floating-point numbers, etc. Each value in a Series is associated with a label (index), which can be an integer or a string.

| Name | Steve |

| Age | 35 |

| Gender | Male |

| Rating | 3.5 |

Example

Consider the following Series which is a collection of different data types

import pandas as pd data = ['Steve', '35', 'Male', '3.5'] series = pd.Series(data, index=['Name', 'Age', 'Gender', 'Rating']) print(series)

On executing the above program, you will get the following output −

Name Steve Age 35 Gender Male Rating 3.5 dtype: object

Key Points

Following are the key points related to the Pandas Series.

- Homogeneous data

- Size Immutable

- Values of Data Mutable

DataFrame

A DataFrame is a two-dimensional labeled data structure with columns that can hold different data types. It is similar to a table in a database or a spreadsheet. Consider the following data representing the performance rating of a sales team −

| Name | Age | Gender | Rating |

|---|---|---|---|

| Steve | 32 | Male | 3.45 |

| Lia | 28 | Female | 4.6 |

| Vin | 45 | Male | 3.9 |

| Katie | 38 | Female | 2.78 |

Example

The above tabular data can be represented in a DataFrame as follows −

import pandas as pd

# Data represented as a dictionary

data = {

'Name': ['Steve', 'Lia', 'Vin', 'Katie'],

'Age': [32, 28, 45, 38],

'Gender': ['Male', 'Female', 'Male', 'Female'],

'Rating': [3.45, 4.6, 3.9, 2.78]

}

# Creating the DataFrame

df = pd.DataFrame(data)

# Display the DataFrame

print(df)

Output

On executing the above code you will get the following output −

Name Age Gender Rating

0 Steve 32 Male 3.45

1 Lia 28 Female 4.60

2 Vin 45 Male 3.90

3 Katie 38 Female 2.78

Key Points

Following are the key points related the Pandas DataFrame −

- Heterogeneous data

- Size Mutable

- Data Mutable

Purpose of Using More Than One Data Structure

Pandas data structures are flexible containers for lower-dimensional data. For instance, a DataFrame is a container for Series, and a Series is a container for scalars. This flexibility allows for efficient data manipulation and storage.

Building and handling multi-dimensional arrays can be boring and require careful consideration of the data's orientation when writing functions. Pandas reduces this mental effort by providing intuitive data structures.

Example

Following example represents a Series within a DataFrame.

import pandas as pd

# Data represented as a dictionary

data = {

'Name': ['Steve', 'Lia', 'Vin', 'Katie'],

'Age': [32, 28, 45, 38],

'Gender': ['Male', 'Female', 'Male', 'Female'],

'Rating': [3.45, 4.6, 3.9, 2.78]

}

# Creating the DataFrame

df = pd.DataFrame(data)

# Display a Series within a DataFrame

print(df['Name'])

Output

On executing the above code you will get the following output −

0 Steve 1 Lia 2 Vin 3 Katie Name: Name, dtype: object

Python Pandas - Index Objects

In Pandas, Index Objects play an important role in organizing and accessing data in a structured way. They work like labeled arrays and play an important role in defining how data is arranged and accessed in structures like Series and DataFrames. The Index allows quick data searches, efficient slicing, and keeps data properly aligned, while giving each row meaningful labels.

An Index is used to label the rows of a DataFrame or elements in a Series. These labels can be numbers, strings, or dates, and they help you to identify the data. One key thing to remember about Pandas indexes is that they are immutable, meaning you cannot change their size once created.

In this tutorial, we will learn about Pandas Index Objects, and various types of indexes in pandas.

The Index Class

The Index class is a basic object for storing all index types in Pandas objects. It provides the basic functionality for accessing and manipulating data.

Key Features of Index Object

Immutable: Index object is a immutable sequence, which cannot modify once it is created.

Alignment: Index ensures that data from different DataFrames or Series can be combined correctly, based on the index values.

Slicing: Index allows fast slicing and retrieval of data based on labels.

Syntax

Following is the syntax of the Index class −

class pandas.Index(data=None, dtype=None, copy=False, name=None, tupleize_cols=True)

Where,

data: The data for the index, which can be an array-like structure (like a list or numpy array) or another index object.

dtype: It specifies the data type for the index values, If not provided, Pandas will decide the data type based on the index values.

copy: It is a boolean parameter (True or False), which, specifies to create a copy of the input data.

name: This parameter gives a label to the index.

data: It is also a boolean parameter (True or False), When True, it tries to create MultiIndex if possible.

Types of Indexes in Pandas

Pandas provides various types of indexes to handle different types of data. Such as −

Let's discuss about all types of indexes in pandas.

NumericIndex

A NumericIndex is the basic index type in Pandas, it contains numerical values. NumericIndex is a default index and Pandas automatically assigns this if you did not provided any index.

Example

Following example demonstrates how pandas automatically assigns NumericIndex to a pandas DataFrame object.

import pandas as pd

# Generate some data for DataFrame

data = {

'Name': ['Steve', 'Lia', 'Vin', 'Katie'],

'Age': [32, 28, 45, 38],

'Gender': ['Male', 'Female', 'Male', 'Female'],

'Rating': [3.45, 4.6, 3.9, 2.78]

}

# Creating the DataFrame

df = pd.DataFrame(data)

# Display the DataFrame

print(df)

print("\nDataFrame Index Object Type:",df.index.dtype)

Output

Following is the output of the above code −

Name Age Gender Rating

0 Steve 32 Male 3.45

1 Lia 28 Female 4.60

2 Vin 45 Male 3.90

3 Katie 38 Female 2.78

DataFrame Index Object Type: int64

Categorical Index

The CategoricalIndex is used to deal the duplicate labels. This index is efficient in terms of memory usage and handling the large number of duplicate elements.

Example

The Following example create a Pandas DataFrame with the CategoricalIndex.

import pandas as pd

# Creating a CategoricalIndex

categories = pd.CategoricalIndex(['a','b', 'a', 'c'])

df = pd.DataFrame({'Col1': [50, 70, 90, 60], 'Col2':[1, 3, 5, 8]}, index=categories)

print("Input DataFrame:\n",df)

print("\nDataFrame Index Object Type:",df.index.dtype)

Output

Following is the output of the above code −

Input DataFrame:

Col1 Col2

a 50 1

b 70 3

a 90 5

c 60 8

DataFrame Index Object Type: category

IntervalIndex

An IntervalIndex is used to represent intervals (ranges) in your data. This type of index will be created using the interval_range() method.

Example

Following example creates a DataFrame with IntervalIndex using the interval_range() method.

import pandas as pd

# Creating a IntervalIndex

interval_idx = pd.interval_range(start=0, end=4)

# Creating a DataFrame with IntervalIndex

df = pd.DataFrame({'Col1': [1, 2, 3, 4], 'Col2':[1, 3, 5, 8]}, index=interval_idx)

print("Input DataFrame:\n",df)

print("\nDataFrame Index Object Type:",df.index.dtype)

Output

Following is the output of the above code −

Input DataFrame:

Col1 Col2

(0, 1] 1 1

(1, 2] 2 3

(2, 3] 3 5

(3, 4] 4 8

DataFrame Index Object Type: interval[int64, right]

MultiIndex

Pandas MultiIndex is used to represent multiple levels or layers in index of Pandas data structures, which is also called as hierarchical.

Example

The following example shows the creation of a simple MultiIndexed DataFrame.

import pandas as pd

# Create MultiIndex

arrays = [[1, 1, 2, 2], ['red', 'blue', 'red', 'blue']]

multi_idx = pd.MultiIndex.from_arrays(arrays, names=('number', 'color'))

# Create a DataFrame with MultiIndex

df = pd.DataFrame({'Col1': [1, 2, 3, 4], 'Col2':[1, 3, 5, 8]}, index=multi_idx)

print("MultiIndexed DataFrame:\n",df)

Output

Following is the output of the above code −

MultiIndexed DataFrame:

Col1 Col2

number color

1 red 1 1

blue 2 3

2 red 3 5

blue 4 8

DatetimeIndex

Pandas DatetimeIndex object is used to represent the date and time values. Nothing but it used for time-series data where each row is linked to a specific timestamp.

Example

The Following example create a Pandas DataFrame with the DatetimeIndex.

import pandas as pd

# Create DatetimeIndex

datetime_idx = pd.DatetimeIndex(["2020-01-01 10:00:00", "2020-02-01 11:00:00"])

# Create a DataFrame with DatetimeIndex

df = pd.DataFrame({'Col1': [1, 2], 'Col2':[1, 3]}, index=datetime_idx )

print("DatetimeIndexed DataFrame:\n",df)

Output

Following is the output of the above code −

DatetimeIndexed DataFrame:

Col1 Col2

2020-01-01 10:00:00 1 1

2020-02-01 11:00:00 2 3

TimedeltaIndex

Pandas TimedeltaIndex is used represent a duration between two dates or times, like the number of days or hours between events.

Example

This example creates a Pandas DataFrame with a TimedeltaIndex.

import pandas as pd

# Create TimedeltaIndex

timedelta_idx = pd.TimedeltaIndex(['0 days', '1 days', '2 days'])

# Create a DataFrame with TimedeltaIndex

df = pd.DataFrame({'Col1': [1, 2, 3], 'Col2':[1, 3, 3]}, index=timedelta_idx )

print("TimedeltaIndexed DataFrame:\n",df)

Output

Following is the output of the above code −

TimedeltaIndexed DataFrame:

Col1 Col2

0 days 1 1

1 days 2 3

2 days 3 3

PeriodIndex

Pandas PeriodIndex is used to represent regular periods in time, like quarters, months, or years.

Example

This example creates a Pandas DataFrame with PeriodIndex object.

import pandas as pd

# Create PeriodIndex

period_idx = pd.PeriodIndex(year=[2020, 2024], quarter=[1, 3])

# Create a DataFrame with PeriodIndex

df = pd.DataFrame({'Col1': [1, 2], 'Col2':[1, 3]}, index=period_idx )

print("PeriodIndexed DataFrame:\n",df)

Output

Following is the output of the above code −

PeriodIndexed DataFrame:

Col1 Col2

2020Q1 1 1

2024Q3 2 3

Python Pandas - Panel

A panel is a 3D container of data. The term Panel data is derived from econometrics and is partially responsible for the name pandas − pan(el)-da(ta)-s.

The Panel class is deprecated and has been removed in recent versions of pandas. The recommended way to represent 3-D data is with a MultiIndex on a DataFrame via the to_frame() method or with the xarray package. pandas provides a to_xarray() method to automate this conversion.

The names for the 3 axes are intended to give some semantic meaning to describing operations involving panel data. They are −

items: axis 0, each item corresponds to a DataFrame contained inside.

major_axis: axis 1, it is the index (rows) of each of the DataFrames.

minor_axis: axis 2, it is the columns of each of the DataFrames.

pandas.Panel()

A Panel can be created using the following constructor −

pandas.Panel(data, items, major_axis, minor_axis, dtype, copy)

The parameters of the constructor are as follows −

| Parameter | Description |

|---|---|

| data | Data takes various forms like ndarray, series, map, lists, dict, constants and also another DataFrame |

| items | axis=0 |

| major_axis | axis=1 |

| minor_axis | axis=2 |

| dtype | Data type of each column |

| copy | Copy data. Default, false |

Create Panel

A Panel can be created using multiple ways like −

- From ndarrays

- From dict of DataFrames

From 3D ndarray

# creating an empty panel import pandas as pd import numpy as np data = np.random.rand(2,4,5) p = pd.Panel(data) print(p)

Its output is as follows −

<class 'pandas.core.panel.Panel'> Dimensions: 2 (items) x 4 (major_axis) x 5 (minor_axis) Items axis: 0 to 1 Major_axis axis: 0 to 3 Minor_axis axis: 0 to 4

Note: Observe the dimensions of the empty panel and the above panel, all the objects are different.

From dict of DataFrame Objects

#creating an empty panel

import pandas as pd

import numpy as np

data = {'Item1' : pd.DataFrame(np.random.randn(4, 3)),

'Item2' : pd.DataFrame(np.random.randn(4, 2))}

p = pd.Panel(data)

print(p)

Its output is as follows −

Dimensions: 2 (items) x 4 (major_axis) x 3 (minor_axis) Items axis: Item1 to Item2 Major_axis axis: 0 to 3 Minor_axis axis: 0 to 2

Create an Empty Panel

An empty panel can be created using the Panel constructor as follows −

#creating an empty panel import pandas as pd p = pd.Panel() print(p)

Its output is as follows −

<class 'pandas.core.panel.Panel'> Dimensions: 0 (items) x 0 (major_axis) x 0 (minor_axis) Items axis: None Major_axis axis: None Minor_axis axis: None

Selecting the Data from Panel

Select the data from the panel using −

- Items

- Major_axis

- Minor_axis

Using Items

# creating an empty panel

import pandas as pd

import numpy as np

data = {'Item1' : pd.DataFrame(np.random.randn(4, 3)),

'Item2' : pd.DataFrame(np.random.randn(4, 2))}

p = pd.Panel(data)

print(p['Item1'])

Its output is as follows −

0 1 2

0 0.488224 -0.128637 0.930817

1 0.417497 0.896681 0.576657

2 -2.775266 0.571668 0.290082

3 -0.400538 -0.144234 1.110535

We have two items, and we retrieved item1. The result is a DataFrame with 4 rows and 3 columns, which are the Major_axis and Minor_axis dimensions.

Using major_axis

Data can be accessed using the method panel.major_axis(index).

# creating an empty panel

import pandas as pd

import numpy as np

data = {'Item1' : pd.DataFrame(np.random.randn(4, 3)),

'Item2' : pd.DataFrame(np.random.randn(4, 2))}

p = pd.Panel(data)

print(p.major_xs(1))

Its output is as follows −

Item1 Item2

0 0.417497 0.748412

1 0.896681 -0.557322

2 0.576657 NaN

Using minor_axis

Data can be accessed using the method panel.minor_axis(index).

# creating an empty panel

import pandas as pd

import numpy as np

data = {'Item1' : pd.DataFrame(np.random.randn(4, 3)),

'Item2' : pd.DataFrame(np.random.randn(4, 2))}

p = pd.Panel(data)

print(p.minor_xs(1))

Its output is as follows −

Item1 Item2

0 -0.128637 -1.047032

1 0.896681 -0.557322

2 0.571668 0.431953

3 -0.144234 1.302466

Note: Observe the changes in the dimensions.

Python Pandas - Basic Functionality

Pandas is a powerful data manipulation library in Python, providing essential tools to work with data in both Series and DataFrame formats. These two data structures are crucial for handling and analyzing large datasets.

Understanding the basic functionalities of Pandas, including its attributes and methods, is essential for effectively managing data, these attributes and methods provide valuable insights into your data, making it easier to understand and process. In this tutorial you will learn about the basic attributes and methods in Pandas that are crucial for working with these data structures.

Working with Attributes in Pandas

Attributes in Pandas allow you to access metadata about your Series and DataFrame objects. By using these attributes you can explore and easily understand the data.

Series and DataFrame Attributes

Following are the widely used attribute of the both Series and DataFrame objects −

| Sr.No. | Attribute & Description |

|---|---|

| 1 |

dtype Returns the data type of the elements in the Series or DataFrame. |

| 2 |

index Provides the index (row labels) of the Series or DataFrame. |

| 3 |

values Returns the data in the Series or DataFrame as a NumPy array. |

| 4 |

shape Returns a tuple representing the dimensionality of the DataFrame (rows, columns). |

| 5 |

ndim Returns the number of dimensions of the object. Series is always 1D, and DataFrame is 2D. |

| 6 |

size Gives the total number of elements in the object. |

| 7 |

empty Checks if the object is empty, and returns True if it is. |

| 8 |

columns Provides the column labels of the DataFrame object. |

Example

Let's create a Pandas Series and explore these attributes operation.

import pandas as pd

import numpy as np

# Create a Series with random numbers

s = pd.Series(np.random.randn(4))

# Exploring attributes

print("Data type of Series:", s.dtype)

print("Index of Series:", s.index)

print("Values of Series:", s.values)

print("Shape of Series:", s.shape)

print("Number of dimensions of Series:", s.ndim)

print("Size of Series:", s.size)

print("Is Series empty?:", s.empty)

Its output is as follows −

Data type of Series: float64 Index of Series: RangeIndex(start=0, stop=4, step=1) Values of Series: [-1.02016329 1.40840089 1.36293022 1.33091391] Shape of Series: (4,) Number of dimensions of Series: 1 Size of Series: 4 Is Series empty?: False

Example

Let's look at below example and understand working of these attributes on a DataFrame object.

import pandas as pd

import numpy as np

# Create a DataFrame with random numbers

df = pd.DataFrame(np.random.randn(3, 4), columns=list('ABCD'))

print("DataFrame:")

print(df)

print("Results:")

print("Data types:", df.dtypes)

print("Index:", df.index)

print("Columns:", df.columns)

print("Values:")

print(df.values)

print("Shape:", df.shape)

print("Number of dimensions:", df.ndim)

print("Size:", df.size)

print("Is empty:", df.empty)

On executing the above code you will get the following output −

DataFrame:

A B C D

0 2.161209 -1.671807 -1.020421 -0.287065

1 0.308136 -0.592368 -0.183193 1.354921

2 -0.963498 -1.768054 -0.395023 -2.454112

Results:

Data types:

A float64

B float64

C float64

D float64

dtype: object

Index: RangeIndex(start=0, stop=3, step=1)

Columns: Index(['A', 'B', 'C', 'D'], dtype='object')

Values:

[[ 2.16120893 -1.67180742 -1.02042138 -0.28706468]

[ 0.30813618 -0.59236786 -0.18319262 1.35492058]

[-0.96349817 -1.76805364 -0.3950226 -2.45411245]]

Shape: (3, 4)

Number of dimensions: 2

Size: 12

Is empty: False

Exploring Basic Methods in Pandas

Pandas offers several basic methods in both the data structures, that makes it easy to quickly look at and understand your data. These methods help you get a summary and explore the details without much effort.

Series and DataFrame Methods

| Sr.No. | Method & Description |

|---|---|

| 1 |

head(n) Returns the first n rows of the object. The default value of n is 5. |

| 2 |

tail(n) Returns the last n rows of the object. The default value of n is 5. |

| 3 |

info() Provides a concise summary of a DataFrame, including the index dtype and column dtypes, non-null values, and memory usage. |

| 4 |

describe() Generates descriptive statistics of the DataFrame or Series, such as count, mean, std, min, and max. |

Example

Let us now create a Series and see the working of the Series basic methods.

import pandas as pd

import numpy as np

# Create a Series with random numbers

s = pd.Series(np.random.randn(10))

print("Series:")

print(s)

# Using basic methods

print("First 5 elements of the Series:\n", s.head())

print("\nLast 3 elements of the Series:\n", s.tail(3))

print("\nDescriptive statistics of the Series:\n", s.describe())

Its output is as follows −

Series: 0 -0.295898 1 -0.786081 2 -1.189834 3 -0.410830 4 -0.997866 5 0.084868 6 0.736541 7 0.133949 8 1.023674 9 0.669520 dtype: float64 First 5 elements of the Series: 0 -0.295898 1 -0.786081 2 -1.189834 3 -0.410830 4 -0.997866 dtype: float64 Last 3 elements of the Series: 7 0.133949 8 1.023674 9 0.669520 dtype: float64 Descriptive statistics of the Series: count 10.000000 mean -0.103196 std 0.763254 min -1.189834 25% -0.692268 50% -0.105515 75% 0.535627 max 1.023674 dtype: float64

Example

Now look at below example and understand working of the basic methods on a DataFrame object.

import pandas as pd

import numpy as np

#Create a Dictionary of series

data = {'Name':pd.Series(['Tom','James','Ricky','Vin','Steve','Smith','Jack']),

'Age':pd.Series([25,26,25,23,30,29,23]),

'Rating':pd.Series([4.23,3.24,3.98,2.56,3.20,4.6,3.8])}

#Create a DataFrame

df = pd.DataFrame(data)

print("Our data frame is:\n")

print(df)

# Using basic methods

print("\nFirst 5 rows of the DataFrame:\n", df.head())

print("\nLast 3 rows of the DataFrame:\n", df.tail(3))

print("\nInfo of the DataFrame:")

df.info()

print("\nDescriptive statistics of the DataFrame:\n", df.describe())

On executing the above code you will get the following output −

Our data frame is:

Name Age Rating

0 Tom 25 4.23

1 James 26 3.24

2 Ricky 25 3.98

3 Vin 23 2.56

4 Steve 30 3.20

5 Smith 29 4.60

6 Jack 23 3.80

First 5 rows of the DataFrame:

Name Age Rating

0 Tom 25 4.23

1 James 26 3.24

2 Ricky 25 3.98

3 Vin 23 2.56

4 Steve 30 3.20

Last 3 rows of the DataFrame:

Name Age Rating

4 Steve 30 3.2

5 Smith 29 4.6

6 Jack 23 3.8

Info of the DataFrame:

<class 'pandas.core.frame.DataFrame'>

RangeIndex: 7 entries, 0 to 6

Data columns (total 3 columns):

# Column Non-Null Count Dtype

--- ------ -------------- -----

0 Name 7 non-null object

1 Age 7 non-null int64

2 Rating 7 non-null float64

dtypes: float64(1), int64(1), object(1)

memory usage: 296.0+ bytes

Descriptive statistics of the DataFrame:

Age Rating

count 7.000000 7.000000

mean 25.857143 3.658571

std 2.734262 0.698628

min 23.000000 2.560000

25% 24.000000 3.220000

50% 25.000000 3.800000

75% 27.500000 4.105000

max 30.000000 4.600000

Python Pandas - Indexing and Selecting Data

In pandas, indexing and selecting data are crucial for efficiently working with data in Series and DataFrame objects. These operations help you to slice, dice, and access subsets of your data easily.

These operations involve retrieving specific parts of your data structure, whether it's a Series or DataFrame. This process is crucial for data analysis as it allows you to focus on relevant data, apply transformations, and perform calculations.

Indexing in pandas is essential because it provides metadata that helps with analysis, visualization, and interactive display. It automatically aligns data for easier manipulation and simplifies the process of getting and setting data subsets.

This tutorial will explore various methods to slice, dice, and manipulate data using Pandas, helping you understand how to access and modify subsets of your data.

Types of Indexing in Pandas

Similar to Python and NumPy indexing ([ ]) and attribute (.) operators, Pandas provides straightforward methods for accessing data within its data structures. However, because the data type being accessed can be unpredictable, relying exclusively on these standard operators may lead to optimization challenges.

Pandas provides several methods for indexing and selecting data, such as −

Label-Based Indexing with .loc

Integer Position-Based Indexing with .iloc

Indexing with Brackets []

Label-Based Indexing with .loc

The .loc indexer is used for label-based indexing, which means you can access rows and columns by their labels. It also supports boolean arrays for conditional selection.

.loc() has multiple access methods like −

single scalar label: Selects a single row or column, e.g., df.loc['a'].

list of labels: Select multiple rows or columns, e.g., df.loc[['a', 'b']].

Label Slicing: Use slices with labels, e.g., df.loc['a':'f'] (both start and end are included).

Boolean Arrays: Filter data based on conditions, e.g., df.loc[boolean_array].

loc takes two single/list/range operator separated by ','. The first one indicates the row and the second one indicates columns.

Example 1

Here is a basic example that selects all rows for a specific column using the loc indexer.

#import the pandas library and aliasing as pd

import pandas as pd

import numpy as np

df = pd.DataFrame(np.random.randn(8, 4),

index = ['a','b','c','d','e','f','g','h'], columns = ['A', 'B', 'C', 'D'])

print("Original DataFrame:\n", df)

#select all rows for a specific column

print('\nResult:\n',df.loc[:,'A'])

Its output is as follows −

Original DataFrame:

A B C D

a 0.962766 -0.195444 1.729083 -0.701897

b -0.552681 0.797465 -1.635212 -0.624931

c 0.581866 -0.404623 -2.124927 -0.190193

d -0.284274 0.019995 -0.589465 0.914940

e 0.697209 -0.629572 -0.347832 0.272185

f -0.181442 -0.000983 2.889981 0.104957

g 1.195847 -1.358104 0.110449 -0.341744

h -0.121682 0.744557 0.083820 0.355442

Result:

a 0.962766

b -0.552681

c 0.581866

d -0.284274

e 0.697209

f -0.181442

g 1.195847

h -0.121682

Name: A, dtype: float64

Note: The output generated will vary with each execution because the DataFrame is created using NumPy's random number generator.

Example 2

This example selecting all rows for multiple columns.

# import the pandas library and aliasing as pd import pandas as pd import numpy as np df = pd.DataFrame(np.random.randn(8, 4), index = ['a','b','c','d','e','f','g','h'], columns = ['A', 'B', 'C', 'D']) # Select all rows for multiple columns, say list[] print(df.loc[:,['A','C']])

Its output is as follows −

A C

a 0.391548 0.745623

b -0.070649 1.620406

c -0.317212 1.448365

d -2.162406 -0.873557

e 2.202797 0.528067

f 0.613709 0.286414

g 1.050559 0.216526

h 1.122680 -1.621420

Example 3

This example selects the specific rows for the specific columns.

# import the pandas library and aliasing as pd import pandas as pd import numpy as np df = pd.DataFrame(np.random.randn(8, 4), index = ['a','b','c','d','e','f','g','h'], columns = ['A', 'B', 'C', 'D']) # Select few rows for multiple columns, say list[] print(df.loc[['a','b','f','h'],['A','C']])

Its output is as follows −

A C

a 0.391548 0.745623

b -0.070649 1.620406

f 0.613709 0.286414

h 1.122680 -1.621420

Example 4

The following example selecting a range of rows for all columns using the loc indexer.

# import the pandas library and aliasing as pd import pandas as pd import numpy as np df = pd.DataFrame(np.random.randn(8, 4), index = ['a','b','c','d','e','f','g','h'], columns = ['A', 'B', 'C', 'D']) # Select range of rows for all columns print(df.loc['c':'e'])

Its output is as follows −

A B C D

c 0.044589 1.966278 0.894157 1.798397

d 0.451744 0.233724 -0.412644 -2.185069

e -0.865967 -1.090676 -0.931936 0.214358

Integer Position-Based Indexing with .iloc

The .iloc indexer is used for integer-based indexing, which allows you to select rows and columns by their numerical position. This method is similar to standard python and numpy indexing (i.e. 0-based indexing).

Single Integer: Selects data by its position, e.g., df.iloc[0].

List of Integers: Select multiple rows or columns by their positions, e.g., df.iloc[[0, 1, 2]].

Integer Slicing: Use slices with integers, e.g., df.iloc[1:3].

Boolean Arrays: Similar to .loc, but for positions.

Example 1

Here is a basic example that selects 4 rows for the all column using the iloc indexer.

# import the pandas library and aliasing as pd

import pandas as pd

import numpy as np

df = pd.DataFrame(np.random.randn(8, 4), columns = ['A', 'B', 'C', 'D'])

print("Original DataFrame:\n", df)

# select all rows for a specific column

print('\nResult:\n',df.iloc[:4])

Its output is as follows −

Original DataFrame:

A B C D

0 -1.152267 2.206954 -0.603874 1.275639

1 -0.799114 -0.214075 0.283186 0.030256

2 -1.823776 1.109537 1.512704 0.831070

3 -0.788280 0.961695 -0.127322 -0.597121

4 0.764930 -1.310503 0.108259 -0.600038

5 -1.683649 -0.602324 -1.175043 -0.343795

6 0.323984 -2.314158 0.098935 0.065528

7 0.109998 -0.259021 -0.429467 0.224148

Result:

A B C D

0 -1.152267 2.206954 -0.603874 1.275639

1 -0.799114 -0.214075 0.283186 0.030256

2 -1.823776 1.109537 1.512704 0.831070

3 -0.788280 0.961695 -0.127322 -0.597121

Example 2

The following example selects the specific data using the integer slicing.

import pandas as pd import numpy as np df = pd.DataFrame(np.random.randn(8, 4), columns = ['A', 'B', 'C', 'D']) # Integer slicing print(df.iloc[:4]) print(df.iloc[1:5, 2:4])

Its output is as follows −

A B C D

0 0.699435 0.256239 -1.270702 -0.645195

1 -0.685354 0.890791 -0.813012 0.631615

2 -0.783192 -0.531378 0.025070 0.230806

3 0.539042 -1.284314 0.826977 -0.026251

C D

1 -0.813012 0.631615

2 0.025070 0.230806

3 0.826977 -0.026251

4 1.423332 1.130568

Example 3

This example selects the data using the slicing through list of values.

import pandas as pd import numpy as np df = pd.DataFrame(np.random.randn(8, 4), columns = ['A', 'B', 'C', 'D']) # Slicing through list of values print(df.iloc[[1, 3, 5], [1, 3]])

Its output is as follows −

B D

1 0.890791 0.631615

3 -1.284314 -0.026251

5 -0.512888 -0.518930

Direct Indexing with Brackets "[]"

Direct indexing with [] is a quick and intuitive way to access data, similar to indexing with Python dictionaries and NumPy arrays. Its often used for basic operations −

Single Column: Access a single column by its name.

Multiple Columns: Select multiple columns by passing a list of column names.

Row Slicing: Slice rows using integer-based indexing.

Example 1

This example demonstrates how to use the direct indexing with brackets for accessing a single column.

import pandas as pd import numpy as np df = pd.DataFrame(np.random.randn(8, 4), columns = ['A', 'B', 'C', 'D']) # Accessing a Single Column print(df['A'])

Its output is as follows −

0 -0.850937 1 -1.588211 2 -1.125260 3 2.608681 4 -0.156749 5 0.154958 6 0.396192 7 -0.397918 Name: A, dtype: float64

Example 2

This example selects the multiple columns using the direct indexing.

import pandas as pd import numpy as np df = pd.DataFrame(np.random.randn(8, 4), columns = ['A', 'B', 'C', 'D']) # Accessing Multiple Columns print(df[['A', 'B']])

Its output is as follows −

A B

0 0.167211 -0.080335

1 -0.104173 1.352168

2 -0.979755 -0.869028

3 0.168335 -1.362229

4 -1.372569 0.360735

5 0.428583 -0.203561

6 -0.119982 1.228681

7 -1.645357 0.331438

Python Pandas - Series

In the Python Pandas library, a Series is one of the primary data structures, that offers a convenient way to handle and manipulate one-dimensional data. It is similar to a column in a spreadsheet or a single column in a database table. In this tutorial you will learn more about Pandas Series and use Series effectively for data manipulation and analysis.

What is a Series?

A Series in Pandas is a one-dimensional labeled array capable of holding data of any type, including integers, floats, strings, and Python objects. It consists of two main components −

- Data: The actual values stored in the Series.

- Index: The labels or indices that correspond to each data value.

A Series is similar to a one-dimensional ndarray (NumPy array) but with labels, which are also known as indices. These labels can be used to access the data within the Series. By default, the index values are integers starting from 0 to the length of the Series minus one, but you can also manually set the index labels.

Creating a Pandas Series

A pandas Series can be created using the following constructor −

class pandas.Series(data, index, dtype, name, copy)

The parameters of the constructor are as follows −

| Sr.No | Parameter & Description |

|---|---|

| 1 |

data Data takes various forms like ndarray, list, or constants. |

| 2 |

index Index values must be unique and hashable, with the same length as data. Default is np.arange(n) if no index is passed. |

| 3 |

dtype Data type. If None, data type will be inferred. |

| 4 |

copy Copy data. Default is False. |

A series object can be created using various inputs like −

- List

- ndarray

- Dict

- Scalar value or constant

Create an Empty Series

If no data is provided to the Series constructor pandas.Series() it will create a basic empty series object.

Example

Following is the example demonstrating creating the empty Series.

#import the pandas library and aliasing as pd

import pandas as pd

s = pd.Series()

# Display the result

print('Resultant Empty Series:\n',s)

Its output is as follows −

Resultant Empty Series: Series([], dtype: object)

Create a Series from ndarray

An ndarray is provided as an input data to the Series constructor, then it will create series with that data. If you want specify the custom index then index passed must be of the same length of input data. If no index is specified, then Pandas will automatically generate a default index from staring 0 to length of the input data, i.e., [0,1,2,3. range(len(array))-1].

Example

Here's the example creating a Pandas Series using an ndarray.

#import the pandas library and aliasing as pd import pandas as pd import numpy as np data = np.array(['a','b','c','d']) s = pd.Series(data) print(s)

Its output is as follows −

0 a 1 b 2 c 3 d dtype: object

We did not pass any index, so by default, it assigned the indexes ranging from 0 to len(data)-1, i.e., 0 to 3.

Example

This example demonstrates applying the custom index to the series object while creating.

#import the pandas library and aliasing as pd

import pandas as pd

import numpy as np

data = np.array(['a','b','c','d'])

s = pd.Series(data,index=[100,101,102,103])

print("Output:\n",s)

Its output is as follows −

Output: 100 a 101 b 102 c 103 d dtype: object

In this example we have provided the index values. Now we can observe the customized indexed values in the output.

Create a Series from Python Dictionary

A dictionary can be passed as input to the pd.Series() constructor to create a series with the dictionary values. If no index is specified, then the dictionary keys are taken in a sorted order to construct the series index. If index is passed, the values in data corresponding to the labels in the index will be pulled out.

Example 1

Here is the basic example of creating the Series object using a Python dictionary.

#import the pandas library and aliasing as pd

import pandas as pd

import numpy as np

data = {'a' : 0., 'b' : 1., 'c' : 2.}

s = pd.Series(data)

print(s)

Its output is as follows −

a 0.0 b 1.0 c 2.0 dtype: float64

Observe − Dictionary keys are used to construct index.

Example 2

In this example a Series object is created with Python dictionary by explicitly specifying the index labels.

#import the pandas library and aliasing as pd

import pandas as pd

import numpy as np

data = {'a' : 0., 'b' : 1., 'c' : 2.}

s = pd.Series(data,index=['b','c','x','a'])

print(s)

Its output is as follows −

b 1.0 c 2.0 d NaN a 0.0 dtype: float64

Observe − Index order is persisted and the missing element is filled with NaN (Not a Number).

Create a Series from Scalar

If you provide a single scalar value as data to the Pd.Series() constructor with specified index labels. Then that single value will be repeated to match the length of provided index object.

Example

Following is the example that demonstrates creating a Series object using a single scalar value.

#import the pandas library and aliasing as pd import pandas as pd import numpy as np s = pd.Series(5, index=[0, 1, 2, 3]) print(s)

Its output is as follows −

0 5 1 5 2 5 3 5 dtype: int64

Python Pandas - Slicing a Series Object

Pandas Series slicing is a process of selecting a group of elements from a Series object. A Series in Pandas is a one-dimensional labeled array that works similarly to the one-dimensional ndarray (NumPy array) but with labels, which are also called indexes.

Pandas Series slicing is very similarly to the Python and NumPy slicing but it comes with additional features, like slicing based on both position and labels. In this tutorial we will learn about the slicing operations on Pandas Series object.

Basics of Pandas Series Slicing

Series slicing can be done by using the [:] operator, which allows you to select subset of elements from the series object by specified start and end points.

Below are the syntax's of the slicing a Series −

Series[start:stop:step]: It selects elements from start to end with specified step value.

Series[start:stop]: It selects items from start to stop with step 1.

Series[start:]: It selects items from start to the rest of the object with step 1.

Series[:stop]: It selects the items from the beginning to stop with step 1.

Series[:]: It selects all elements from the series object.

Slicing a Series by Position

Pandas Series allows you to select the elements based on their position(i.e, Index values), just like Python list object.

Example: Slicing range of values from a Series

Following is the example of demonstrating how to slice a range value from a series object using the positions.

import pandas as pd

import numpy as np

data = np.array(['a', 'b', 'c', 'd'])

s = pd.Series(data)

# Display the Original series

print('Original Series:',s, sep='\n')

# Slice the range of values

result = s[1:3]

# Display the output

print('Values after slicing the Series:', result, sep='\n')

Following is the output of the above code −

Original Series: 0 a 1 b 2 c 3 d dtype: object Values after slicing the Series: 1 b 2 c dtype: object

Example: Slicing the First Three Elements from a Series

This example retrieves the first three elements in the Series using it's position(i.e, index values).

import pandas as pd s = pd.Series([1,2,3,4,5],index = ['a','b','c','d','e']) #retrieve the first three element print(s[:3])

Its output is as follows −

a 1 b 2 c 3 dtype: int64

Example: Slicing the Last Three Elements from a Series

Similar to the above example the following example retrieves the last three elements from the Series using the element position.

import pandas as pd s = pd.Series([1,2,3,4,5],index = ['a','b','c','d','e']) #retrieve the last three element print(s[-3:])

Its output is as follows −

c 3 d 4 e 5 dtype: int64

Slicing a Series by Label

A Pandas Series is like a fixed-size Python dict in that you can get and set values by index labels.

Example: Slicing Group of elements from a Series using the Labels

The following example retrieves multiple elements with slicing the label of a Series.

import pandas as pd s = pd.Series([1,2,3,4,5],index = ['a','b','c','d','e']) # Slice multiple elements print(s['a':'d'])

Its output is as follows −

a 1 b 2 c 3 d 4 dtype: int64

Example: Slicing First Three Elements using the Labels

The following example slice the first few elements using the label of a Series data.

import pandas as pd s = pd.Series([1,2,3,4,5],index = ['a','b','c','d','e']) # Slice multiple elements print(s[:'c'])

Its output is as follows −

a 1 b 2 c 3 dtype: int64

Modifying Values after Slicing

After slicing a Series, you can also modify the values, by assigning the new values to those sliced elements.

Example

The following example demonstrates how to modify the series values after accessing the range values through slice.

import pandas as pd

s = pd.Series([1,2,3,4,5])

# Display the original series

print("Original Series:\n",s)

# Modify the values of first two elements

s[:2] = [100, 200]

print("Series after modifying the first two elements:",s)

Following is the output of the above code −

Original Series: 0 1 1 2 2 3 3 4 4 5 dtype: int64 Series after modifying the first two elements: 0 100 1 200 2 3 3 4 4 5 dtype: int64

Python Pandas - Attributes of a Series Object

Pandas Series is one of the primary data structures, provides a convenient way to handle and manipulate one-dimensional data. It looks similar to a single column in a spreadsheet or a single column in a database table.

Series object attributes are tools that help you get information about series object and its data. Pandas provides multiple attributes to understand and manipulate the data in a Series. In this tutorial you will learn about Pandas Series attributes.

Data Information

These attributes provide information about the data in the Series −

| Sr.No. | Methods & Description |

|---|---|

| 1 | dtype Returns the data type of the underlying data. |

| 2 | dtypes Returns the data type of the underlying data. |

| 3 | nbytes Returns the number of bytes in the underlying data. |

| 4 | ndim Returns the number of dimensions of the underlying data, which is always 1 for a Series. |

| 5 | shape Returns a tuple representing the shape of the underlying data. |

| 6 | size Returns the number of elements in the underlying data. |

| 7 | values Returns the Series as an ndarray or ndarray-like object depending on the data type. |

Data Access

These attributes help in accessing data within the Series −

| Sr.No. | Methods & Description |

|---|---|

| 1 | at Accesses a single value using a row/column label pair. |

| 2 | iat Accesses a single value by integer position. |

| 3 | loc Accesses a group of rows and columns by labels or a boolean array. |

Data Properties

These attributes provide properties and metadata about the Series −

| Sr.No. | Methods & Description |

|---|---|

| 1 |

empty Indicates whether the Series or DataFrame is empty. |

| 2 | flags Gets the properties associated with the Pandas object. |

| 3 | hasnans Returns True if there are any NaN values. |

| 4 | index Returns the index (axis labels) of the Series. |

| 5 | is_monotonic_decreasing Returns True if the values are monotonically decreasing. |

| 6 | is_monotonic_increasing Returns True if the values are monotonically increasing. |

| 7 | is_unique Returns True if all values are unique. |

| 8 | name Returns the name of the Series. |

Other

This category includes attributes that perform a variety of other operations −

| Sr.No. | Methods & Description |

|---|---|

| 1 | array Provides the underlying data of the Series as an ExtensionArray. |

| 2 |

attrs Returns a dictionary of global attributes of the dataset. |

| 3 | axes Returns a list of the row axis labels. |

| 4 |

T Returns the transpose of the Series, which is essentially the same as the original Series. |

Python Pandas - Arithmetic Operations on Series Object

Pandas Series is one of the primary data structures, that stores the one-dimensional labeled data. The data can be any type, such as integers, floats, or strings. One of the primary advantages of using a Pandas Series is the ability to perform arithmetic operations in a vectorized manner. This means arithmetic operations on Series are performed without needing a loop through elements manually.

In this tutorial, we will learn how to apply arithmetic operations like addition(+), subtraction(-), multiplication(*), and division(/) to a single Series or between two Series objects.

Arithmetic Operations on a Series with Scalar Value

Arithmetic operations on a Pandas Series object can be directly applied to an entire Series elements, which means the operation is executed element-wise across all values. This is very similar to how operations work with NumPy arrays.

Following is the list of commonly used arithmetic operations on Pandas Series −

| Operation | Example | Description |

|---|---|---|

| Addition | s + 2 | Adds 2 to each element |

| Subtraction | s - 2 | Subtracts 2 from each element |

| Multiplication | s * 2 | Multiplies each element by 2 |

| Division | s / 2 | Divides each element by 2 |

| Exponentiation | s ** 2 | Raises each element to the power of 2 |

| Modulus | s % 2 | Finds remainder when divided by 2 |

| Floor Division | s // 2 | Divides and floors the quotient |

Example

The following example demonstrates how to applies the all arithmetical operations on a Series object with the scalar values.

import pandas as pd

s = pd.Series([1,2,3,4,5],index = ['a','b','c','d','e'])

# Display the Input Series

print('Input Series\n',s)

# Apply all Arithmetic Operation and Display the Results

print('\nAddition:\n',s+2)

print('\nSubtraction:\n', s-2)

print('\nMultiplication:\n', s * 2)

print('\nDivision:\n', s/2)

print('\nExponentiation:\n', s**2)

print('\nModulus:\n', s%2)

print('\nFloor Division:\n', s//2)

Following is the output of the above code −

Input Series a 1 b 2 c 3 d 4 e 5 dtype: int64 Addition: a 3 b 4 c 5 d 6 e 7 dtype: int64 Subtraction: a -1 b 0 c 1 d 2 e 3 dtype: int64 Multiplication: a 2 b 4 c 6 d 8 e 10 dtype: int64 Division: a 0.5 b 1.0 c 1.5 d 2.0 e 2.5 dtype: float64 Exponentiation: a 1 b 4 c 9 d 16 e 25 dtype: int64 Modulus: a 1 b 0 c 1 d 0 e 1 dtype: int64 Floor Division: a 0 b 1 c 1 d 2 e 2 dtype: int64

Arithmetic Operations Between Two Series

You can perform arithmetical operations between two series objects. Pandas automatically aligns the data by index labels. If one of the Series object does not have an index but not the other, then the resultant value for that index will be NaN.

Example

This example demonstrates applying the arithmetic operations on two series objects.

import pandas as pd

s1 = pd.Series([1,2,3,4,5],index = ['a','b','c','d','e'])

s2 = pd.Series([9, 8, 6, 5], index=['x','a','b','c'])

# Apply all Arithmetic Operations and Display the Results

print('\nAddition:\n',s1+s2)

print('\nSubtraction:\n', s1-s2)

print('\nMultiplication:\n', s1 * s2)

print('\nDivision:\n', s1/s2)

Following is the output of the above code −

Addition: a 9.0 b 8.0 c 8.0 d NaN e NaN x NaN dtype: float64 Subtraction: a -7.0 b -4.0 c -2.0 d NaN e NaN x NaN dtype: float64 Multiplication: a 8.0 b 12.0 c 15.0 d NaN e NaN x NaN dtype: float64 Division: a 0.125000 b 0.333333 c 0.600000 d NaN e NaN x NaN dtype: float64

Python Pandas - Converting Series to Other Objects

Pandas Series is a one-dimensional array-like object containing data of any type, such as integers, floats, and strings. And the data elements is associated with labels (index). In some situations, you need to convert a Pandas Series into different formats for various use cases like creating lists, NumPy arrays, dictionaries, or even converting the Series into a DataFrame.

In this tutorial, we will learn about various methods available in Pandas to convert a Series into different formats such as lists, NumPy arrays, dictionaries, DataFrames, and strings.

Following are the commonly used methods for converting Series into other formats −

| Method | Description |

|---|---|

| to_list() | Converts the Series into a Python list. |

| to_numpy() | Converts the Series into a NumPy array. |

| to_dict() | Converts the Series into a dictionary. |

| to_frame() | Converts the Series into a DataFrame. |

| to_string() | Converts the Series into a string representation for display. |

Converting Series to List

The Series.to_list() method converts a Pandas Series to a Python list, where each element of the Series becomes an element of the returned list. And the type of each element in the list is types as those in the Series.

Example

Here is the example of converting a Pandas Series into a Python list Using the Series.to_list() method.

import pandas as pd

# Create a Pandas Series

s = pd.Series([1, 2, 3])

# Convert Series to a Python list

result = s.to_list()

print("Output:",result)

print("Output Type:", type(result))

Output

Following is the output of the above code −

Output: [1, 2, 3] Output Type: <class 'list'>

Converting Series to NumPy Array

The Pandas Series.to_numpy() method can be used to convert a Pandas Series into a NumPy array. This method provides a additional features like specifying the data type (dtype), handle missing values (na_value), and control whether the result should be a copy or a view.

Example

This example converts a Series into a NumPy array using the Series.to_numpy() method.

import pandas as pd

# Create a Pandas Series

s = pd.Series([1, 2, 3])

# Convert Series to a NumPy Array

result = s.to_numpy()

print("Output:",result)

print("Output Type:", type(result))

Output

Output: [1, 2, 3] Output Type: <class 'numpy.ndarray'>

Converting Pandas Series to a Dictionary

The Pandas Series.to_dict() method is used to convert a Series into a Python dictionary, where each label (index) becomes a key and each corresponding value becomes the dictionary's value.

Example

The following example converts a Series into a Python dictionary using the Series.to_dict() method.

import pandas as pd

# Create a Pandas Series

s = pd.Series([1, 2, 3], index=['a', 'b', 'c'])

# Convert Series to a Python dictionary

result = s.to_dict()

print("Output:",result)

print("Output Type:", type(result))

Output

Output: {'a': 1, 'b': 2, 'c': 3}

Output Type: <class 'dict'>

Converting a Series to DataFrame

The Series.to_frame() method allows you to convert a Series into a DataFrame. Each Series becomes a single column in the DataFrame. This method provides a name parameter to set the column name of the resulting DataFrame.

Example

This example uses the Series.to_frame() method to convert a Series into a Pandas DataFrame with a single column.

import pandas as pd

# Create a Pandas Series

s = pd.Series([1, 2, 3], index=['a', 'b', 'c'])

# Convert Series to a Pandas DataFrame

result = s.to_frame(name='Numbers')

print("Output:\n",result)

print("Output Type:", type(result))

Output

Output:

Numbers

a 1

b 2

c 3

Output Type: <class 'pandas.core.frame.DataFrame'>

Converting Series to Python String

To convert a Pandas Series object to a Python string you can use the he Series.to_string() method, which renders a string representation of the Series.

This method returns a string showing the index and values of the Series. You can customize the output string using various parameters like na_rep (represent missing values), header, index, float_format, length, etc.

Example

This example converts a Series into the Python string representation using the Series.to_string() method.

import pandas as pd

# Create a Pandas Series

s = pd.Series([1, 2, 3], index=['r1', 'r2', 'r3'])

# Convert Series to string representation

result = s.to_string()

print("Output:",repr(result))

print("Output Type:", type(result))

Output

Output: 'r1 1\nr2 2\nr3 3' Output Type: <class 'str'>

Python Pandas - DataFrame

A DataFrame in Python's pandas library is a two-dimensional labeled data structure that is used for data manipulation and analysis. It can handle different data types such as integers, floats, and strings. Each column has a unique label, and each row is labeled with a unique index value, which helps in accessing specific rows.

DataFrame is used in machine learning tasks which allow the users to manipulate and analyze the data sets in large size. It supports the operations such as filtering, sorting, merging, grouping and transforming data.

Features of DataFrame

Following are the features of the Pandas DataFrame −

- Columns can be of different types.

- Size is mutable.

- Labeled axes (rows and columns).

- Can Perform Arithmetic operations on rows and columns.

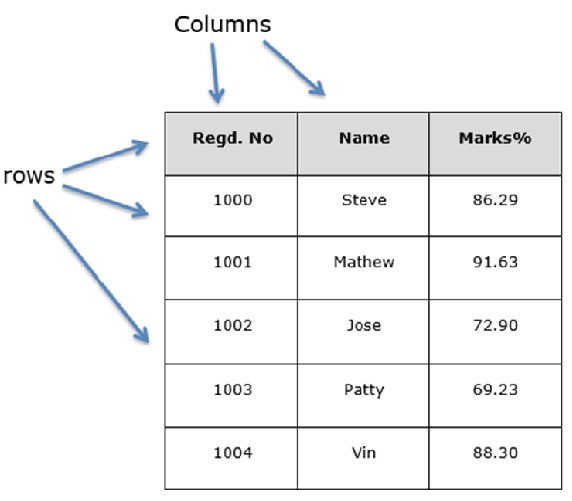

Python Pandas DataFrame Structure

You can think of a DataFrame as similar to an SQL table or a spreadsheet data representation. Let us assume that we are creating a data frame with student's data.

Creating a pandas DataFrame

A pandas DataFrame can be created using the following constructor −

pandas.DataFrame(data=None, index=None, columns=None, dtype=None, copy=None)

The parameters of the constructor are as follows −

| Sr.No | Parameter & Description |

|---|---|

| 1 |

data data takes various forms like ndarray, series, map, lists, dict, constants and also another DataFrame. |

| 2 |

index For the row labels, the Index to be used for the resulting frame is Optional Default np.arange(n) if no index is passed. |

| 3 |

columns This parameter specifies the column labels, the optional default syntax is - np.arange(n). This is only true if no index is passed. |

| 4 |

dtype Data type of each column. |

| 5 |

copy This command (or whatever it is) is used for copying of data, if the default is False. |

Creating a DataFrame from Different Inputs

A pandas DataFrame can be created using various inputs like −

- Lists

- Dictionary

- Series

- Numpy ndarrays

- Another DataFrame

- External input iles like CSV, JSON, HTML, Excel sheet, and more.

In the subsequent sections of this chapter, we will see how to create a DataFrame using these inputs.

Create an Empty DataFrame

An empty DataFrame can be created using the DataFrame constructor without any input.

Example

Following is the example creating an empty DataFrame.

#import the pandas library and aliasing as pd import pandas as pd df = pd.DataFrame() print(df)

Its output is as follows −

Empty DataFrame Columns: [] Index: []

Create a DataFrame from Lists

The DataFrame can be created using a single list or a list of lists.

Example

The following example demonstrates how to create a pandas DataFrame from a Python list object.

import pandas as pd data = [1,2,3,4,5] df = pd.DataFrame(data) print(df)

Its output is as follows −

0

0 1

1 2

2 3

3 4

4 5

Example

Here is another example of creating a Pandas DataFrame from the Python list of list.

import pandas as pd data = [['Alex',10],['Bob',12],['Clarke',13]] df = pd.DataFrame(data,columns=['Name','Age']) print(df)

Its output is as follows −

Name Age

0 Alex 10

1 Bob 12

2 Clarke 13

Create a DataFrame from Dict of ndarrays / Lists

All the ndarrays must be of same length. If index is passed, then the length of the index should equal to the length of the arrays.

If no index is passed, then by default, index will be range(n), where n is the array length.

Example

Here is the example of creating the DataFrame from a Python dictionary.

import pandas as pd

data = {'Name':['Tom', 'Jack', 'Steve', 'Ricky'],'Age':[28,34,29,42]}

df = pd.DataFrame(data)

print(df)

Its output is as follows −

Age Name

0 28 Tom

1 34 Jack

2 29 Steve

3 42 Ricky

Note − Observe the values 0,1,2,3. They are the default index assigned to each using the function range(n).

Example

Let us now create an indexed DataFrame using arrays.

import pandas as pd

data = {'Name':['Tom', 'Jack', 'Steve', 'Ricky'],'Age':[28,34,29,42]}

df = pd.DataFrame(data, index=['rank1','rank2','rank3','rank4'])

print(df)

Its output is as follows −

Age Name

rank1 28 Tom

rank2 34 Jack

rank3 29 Steve

rank4 42 Ricky

Note − Observe, the index parameter assigns an index to each row.

Create a DataFrame from List of Dicts

List of Dictionaries can be passed as input data to create a DataFrame. The dictionary keys are by default taken as column names.

Example

The following example shows how to create a DataFrame by passing a list of dictionaries.

import pandas as pd

data = [{'a': 1, 'b': 2},{'a': 5, 'b': 10, 'c': 20}]

df = pd.DataFrame(data)

print(df)

Its output is as follows −

a b c

0 1 2 NaN

1 5 10 20.0

Note − Observe, NaN (Not a Number) is appended in missing areas.

Example

The following example shows how to create a DataFrame with a list of dictionaries, row indices, and column indices.

import pandas as pd

data = [{'a': 1, 'b': 2},{'a': 5, 'b': 10, 'c': 20}]

#With two column indices, values same as dictionary keys

df1 = pd.DataFrame(data, index=['first', 'second'], columns=['a', 'b'])

#With two column indices with one index with other name

df2 = pd.DataFrame(data, index=['first', 'second'], columns=['a', 'b1'])

print(df1)

print(df2)

Its output is as follows −

#df1 output

a b

first 1 2

second 5 10

#df2 output

a b1

first 1 NaN

second 5 NaN

Note − Observe, df2 DataFrame is created with a column index other than the dictionary key; thus, appended the NaNs in place. Whereas, df1 is created with column indices same as dictionary keys, so NaNs appended.

Create a DataFrame from Dict of Series

Dictionary of Series can be passed to form a DataFrame. The resultant index is the union of all the series indexes passed.

Example

Here is the example −

import pandas as pd

d = {'one' : pd.Series([1, 2, 3], index=['a', 'b', 'c']),

'two' : pd.Series([1, 2, 3, 4], index=['a', 'b', 'c', 'd'])}

df = pd.DataFrame(d)

print(df)

Its output is as follows −

one two

a 1.0 1

b 2.0 2

c 3.0 3

d NaN 4

Note − Observe, for the series one, there is no label d passed, but in the result, for the d label, NaN is appended with NaN.

Example

Another example of creating a Pandas DataFrame from a Series −

import pandas as pd data = pd.Series([1, 2, 3, 4], index=['a', 'b', 'c', 'd']) df = pd.DataFrame(data) print(df)

Its output is as follows −

0

a 1

b 2

c 3

d 4

Python Pandas - Accessing DataFrame

Pandas DataFrame is a two-dimensional labeled data structure with rows and columns labels, it is looks and works similar to a table in a database or a spreadsheet. To work with the DataFrame labels, pandas provides simple tools to access and modify the rows and columns using index the index and columns attributes of a DataFrame.

In this tutorial, we will learn about how to access and modify rows and columns in a Pandas DataFrame using the index and columns attributes of the DataFrame.

Accessing the DataFrame Rows Labels

The index attribute in Pandas is used to access row labels in a DataFrame. It returns an index object containing the series of labels corresponding to the data represented in the each row of the DataFrame. These labels can be integers, strings, or other hashable types.

Example

The following example access the DataFrame row labels using the pd.index attribute.

import pandas as pd

# Create a DataFrame

df = pd.DataFrame({

'Name': ['Steve', 'Lia', 'Vin', 'Katie'],

'Age': [32, 28, 45, 38],

'Gender': ['Male', 'Female', 'Male', 'Female'],

'Rating': [3.45, 4.6, 3.9, 2.78]},

index=['r1', 'r2', 'r3', 'r4'])

# Access the rows of the DataFrame

result = df.index

print('Output Accessed Row Labels:', result)

Output

Following is the output of the above code −

Output Accessed Row Labels: Index(['r1', 'r2', 'r3', 'r4'], dtype='object')

Modifying DataFrame Row Labels

With the index attribute you can also modify the row labels of a DataFrame.

Example

Here is an example that demonstrates accessing and modifying the row labels of the Pandas DataFrame using the index attribute.

import pandas as pd

# Create a DataFrame

df = pd.DataFrame({

'Name': ['Steve', 'Lia', 'Vin', 'Katie'],

'Age': [32, 28, 45, 38],

'Gender': ['Male', 'Female', 'Male', 'Female'],

'Rating': [3.45, 4.6, 3.9, 2.78]},

index=['r1', 'r2', 'r3', 'r4'])

# Display the Input DataFrame

print('Input DataFrame:\n', df)

# Modify the Row labels of the DataFrame

df.index = [100, 200, 300, 400]

print('Output Modified DataFrame with the updated index labels:\n', df)

Output

On executing the above code you will get the following output −

Input DataFrame:

Name Age Gender Rating

r1 Steve 32 Male 3.45

r2 Lia 28 Female 4.60

r3 Vin 45 Male 3.90

r4 Katie 38 Female 2.78

Output Modified DataFrame with the updated index labels:

Name Age Gender Rating

100 Steve 32 Male 3.45

200 Lia 28 Female 4.60

300 Vin 45 Male 3.90

400 Katie 38 Female 2.78

Accessing The DataFrame Columns Labels

The Pandas pd.columns attribute is used to access the labels of the columns in the DataFrame. You can access and modify these column labels similarly to how we work with row labels.

Example

The following example demonstrates how to access the DataFrame column labels using the pd.columns attribute.

import pandas as pd

# Create a DataFrame

df = pd.DataFrame({

'Name': ['Steve', 'Lia', 'Vin', 'Katie'],

'Age': [32, 28, 45, 38],

'Gender': ['Male', 'Female', 'Male', 'Female'],

'Rating': [3.45, 4.6, 3.9, 2.78]},

index=['r1', 'r2', 'r3', 'r4'])

# Access the column labels of the DataFrame

result = df.columns

print('Output Accessed column Labels:', result)

Output

Following is the output of the above code −

Output Accessed column Labels: Index(['Name', 'Age', 'Gender', 'Rating'], dtype='object')

Modifying the DataFrame Column Labels

Column labels can be modified using the columns attribute.

Example

This example demonstrates how to access and modify the DataFrame column labels using the pd.columns attribute.

import pandas as pd

# Create a DataFrame

df = pd.DataFrame({

'Name': ['Steve', 'Lia', 'Vin', 'Katie'],

'Age': [32, 28, 45, 38],

'Gender': ['Male', 'Female', 'Male', 'Female'],

'Rating': [3.45, 4.6, 3.9, 2.78]},

index=['r1', 'r2', 'r3', 'r4'])

# Display the Input DataFrame

print('Input DataFrame:\n', df)

# Modify the Column labels of the DataFrame

df.columns = ['Col1', 'Col2', 'Col3', 'Col4']

print('Output Modified DataFrame with the updated Column Labels\n:', df)

Output

Following is the output of the above code −

Input DataFrame:

Name Age Gender Rating

r1 Steve 32 Male 3.45

r2 Lia 28 Female 4.60

r3 Vin 45 Male 3.90

r4 Katie 38 Female 2.78

Output Modified DataFrame with the updated Column Labels:

Col1 Col2 Col3 Col4

r1 Steve 32 Male 3.45

r2 Lia 28 Female 4.60

r3 Vin 45 Male 3.90

r4 Katie 38 Female 2.78

Python Pandas - Slicing a DataFrame Object

Pandas DataFrame slicing is a process of extracting specific rows, columns, or subsets of data based on both position and labels. DataFrame slicing is a common operation while working with large datasets, it is similar to Python lists and NumPy ndarrays, DataFrame slicing uses the [] operator and specific slicing attributes like .iloc[] and .loc[] to retrieve data efficiently.

In this tutorial, we will learn about how to slice Pandas DataFrames using both positional and label-based indexing.

Introduction to Pandas DataFrame Slicing

Pandas DataFrame slicing is performed using two main attributes, which are −

.iloc[]: For slicing based on position (integer-based indexing).

.loc[]: For slicing based on labels (index labels or column labels).

Let's learn about all possible ways of slicing a Pandas DataFrame.

Slicing a DataFrame by Position

The Pandas DataFrame.iloc[] attribute used to slice a DataFrame based on the integer position (i.e, integer-based indexing) of rows and columns.

Following is the syntax of slicing a DataFrame using the .iloc[] attribute −

DataFrame.iloc[row_start:row_end, column_start:column_end]

Where, row_start and row_end are indicates the start and end integer-based index values of the DataFrame rows. Similarly, column_start and column_end are the column index values.

Example: Slicing DataFrame Rows by Position

The following example demonstrates how to slice the DataFrame rows using the DataFrame.iloc[] attribute.

import pandas as pd

# Create a Pandas DataFrame

df = pd.DataFrame([['a','b'], ['c','d'], ['e','f'], ['g','h']], columns=['col1', 'col2'])

# Display the DataFrame

print("Input DataFrame:")

print(df)

# Slice rows based on position

result = df.iloc[1:3, :]

print("Output:")

print(result)